Edition 20.0

Abstract

Mono-spaced Bold

To see the contents of the file my_next_bestselling_novel in your current working directory, enter the cat my_next_bestselling_novel command at the shell prompt and press Enter to execute the command.

Press Enter to execute the command.

Press Ctrl+Alt+F2 to switch to a virtual terminal.

mono-spaced bold. For example:

File-related classes include filesystem for file systems, file for files, and dir for directories. Each class has its own associated set of permissions.

Choose → → from the main menu bar to launch Mouse Preferences. In the Buttons tab, select the Left-handed mouse check box and click to switch the primary mouse button from the left to the right (making the mouse suitable for use in the left hand).

To insert a special character into a gedit file, choose → → from the main menu bar. Next, choose → from the Character Map menu bar, type the name of the character in the Search field and click . The character you sought will be highlighted in the Character Table. Double-click this highlighted character to place it in the Text to copy field and then click the button. Now switch back to your document and choose → from the gedit menu bar.

Mono-spaced Bold Italic or Proportional Bold Italic

To connect to a remote machine using ssh, type ssh username@domain.name at a shell prompt. If the remote machine is example.com and your username on that machine is john, type ssh john@example.com.

The mount -o remount file-system command remounts the named file system. For example, to remount the /home file system, the command is mount -o remount /home.

To see the version of a currently installed package, use the rpm -q package command. It will return a result as follows: package-version-release.

Publican is a DocBook publishing system.

mono-spaced roman and presented thus:

books Desktop documentation drafts mss photos stuff svn books_tests Desktop1 downloads images notes scripts svgs

mono-spaced roman but add syntax highlighting as follows:

package org.jboss.book.jca.ex1;

import javax.naming.InitialContext;

public class ExClient

{

public static void main(String args[])

throws Exception

{

InitialContext iniCtx = new InitialContext();

Object ref = iniCtx.lookup("EchoBean");

EchoHome home = (EchoHome) ref;

Echo echo = home.create();

System.out.println("Created Echo");

System.out.println("Echo.echo('Hello') = " + echo.echo("Hello"));

}

}

Note

Important

Warning

Fedora and the component security-guide. The following link automatically loads this information for you: http://bugzilla.redhat.com/.

Summary field.

Description field and give us the details of the error or suggestion as specifically as you can. If possible, include some surrounding text so we know where the error occurs or the suggestion fits.

Document URL: Section number and name: Error or suggestion: Additional information:

Warning

nmap command followed by the hostname or IP address of the machine to scan.

nmap foo.example.com

Starting Nmap 4.68 ( http://nmap.org )
Interesting ports on foo.example.com:
Not shown: 1710 filtered ports
PORT STATE SERVICE
22/tcp open ssh
53/tcp open domain
70/tcp closed gopher
80/tcp open http
113/tcp closed auth

Note

Note

Table 1.1. Common Exploits

| Exploit | Description | Notes |
|---|---|---|
| Null or Default Passwords | Leaving administrative passwords blank or using a default password set by the product vendor. This is most common in hardware such as routers and firewalls, though some services that run on Linux can contain default administrator passwords. | |
| Default Shared Keys | Secure services sometimes package default security keys for development or evaluation testing purposes. If these keys are left unchanged and are placed in a production environment on the Internet, all users with the same default keys have access to that shared-key resource, and any sensitive information that it contains. | |
| IP Spoofing | A remote machine acts as a node on your local network, finds vulnerabilities with your servers, and installs a backdoor program or trojan horse to gain control over your network resources. | |
| Eavesdropping | Collecting data that passes between two active nodes on a network by eavesdropping on the connection between the two nodes. | |
| Service Vulnerabilities | An attacker finds a flaw or loophole in a service run over the Internet; through this vulnerability, the attacker compromises the entire system and any data that it may hold, and could possibly compromise other systems on the network. | |
| Application Vulnerabilities | Attackers find faults in desktop and workstation applications (such as e-mail clients) and execute arbitrary code, implant trojan horses for future compromise, or crash systems. Further exploitation can occur if the compromised workstation has administrative privileges on the rest of the network. | |
| Denial of Service (DoS) Attacks | An attacker or group of attackers coordinates an attack against an organization's network or server resources by sending unauthorized packets to the target host (either server, router, or workstation). This forces the resource to become unavailable to legitimate users. | |

Note

/mnt/cdrom, use the following command to import it into the keyring (a database of trusted keys on the system):

rpm --import /mnt/cdrom/RPM-GPG-KEY

rpm -qa gpg-pubkey*

gpg-pubkey-db42a60e-37ea5438

rpm -qi command followed by the output from the previous command, as in this example:

rpm -qi gpg-pubkey-db42a60e-37ea5438

rpm -K /tmp/updates/*.rpm

gpg OK. If it doesn't, make sure you are using the correct Fedora public key, as well as verifying the source of the content. Packages that do not pass GPG verifications should not be installed, as they may have been altered by a third party.

rpm -Uvh /tmp/updates/*.rpm

rpm -ivh /tmp/updates/<kernel-package>

rpm -e <old-kernel-package>

Note

Important

Note

glibc, which are used by a number of applications and services. Applications utilizing a shared library typically load the shared code when the application is initialized, so any applications using the updated library must be halted and relaunched.

lsof command as in the following example:

lsof /lib/libwrap.so*

tcp_wrappers package is updated.

sshd, vsftpd, and xinetd.

/usr/bin/systemctl command as in the following example:

/usr/bin/systemctl restart <service-name>

sshd.

xinetd Services

xinetd super service only run when there is an active connection. Examples of services controlled by xinetd include Telnet, IMAP, and POP3.

xinetd each time a new request is received, connections that occur after an upgrade are handled by the updated software. However, if there are active connections at the time the xinetd controlled service is upgraded, they are serviced by the older version of the software.

xinetd controlled service, upgrade the package for the service then halt all processes currently running. To determine if the process is running, use the ps command and then use the kill or killall command to halt current instances of the service.

imap packages are released, upgrade the packages, then type the following command as root into a shell prompt:

ps -aux | grep imap

kill <PID>

kill -9 <PID>

ps command) for an IMAP session.

killall imapd

| Encrypt all data transmitted over the network. Encrypting authentication information, such as passwords and cookies, is particularly important. |

| Minimize the amount of software installed and running in order to minimize vulnerability. |

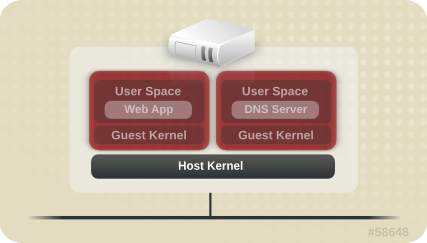

| Use security-enhancing software and tools whenever available (e.g. SELinux and IPTables). |

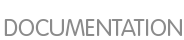

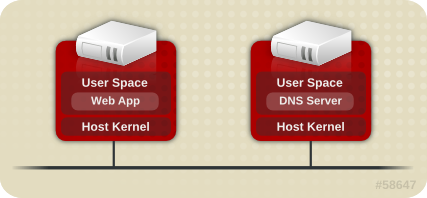

| Run each network service on a separate server whenever possible. This minimizes the risk that a compromise of one service could lead to a compromise of others. |

| Maintain user accounts. Create a good password policy and enforce its use. Delete unused user accounts. |

| Review system and application logs on a routine basis. Send logs to a dedicated, centralized log server. This prevents intruders from easily avoiding detection by modifying the local logs. |

| Never log in directly as root, unless absolutely necessary. Administrators should use sudo to execute commands as root when required. The accounts capable of using sudo are specified in /etc/sudoers, which is edited with the visudo utility. By default, relevant logs are written to /var/log/secure. |

cat command.

/etc/passwd file, which makes the system vulnerable to offline password cracking attacks. If an intruder can gain access to the machine as a regular user, he can copy the /etc/passwd file to his own machine and run any number of password cracking programs against it. If there is an insecure password in the file, it is only a matter of time before the password cracker discovers it.

/etc/shadow, which is readable only by the root user.

otrattw,tghwg.

7 for t and the at symbol (@) for a:

o7r@77w,7ghwg.

H.

o7r@77w,7gHwg.
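The three password strings above follow a mechanical recipe: take the first letter of each word in a memorable phrase, swap some letters for digits or symbols, then capitalize at least one letter. The sketch below reproduces that recipe. The source phrase is an assumption (it is not shown in this section), the helper names are hypothetical, and the result is an illustration only, since a published example must never be used as a real password.

```python
# Illustrative sketch only: helper names are hypothetical, and the phrase is
# an assumed example that happens to yield the strings shown in the text.
PUNCTUATION = ",.;:!?"


def first_letters(phrase: str) -> str:
    """Keep the first letter of each word plus any trailing punctuation."""
    pieces = []
    for word in phrase.split():
        pieces.append(word[0].lower())
        if word[-1] in PUNCTUATION:
            pieces.append(word[-1])
    return "".join(pieces)


def substitute(text: str, table: dict[str, str]) -> str:
    """Replace every character that appears in the substitution table."""
    return "".join(table.get(ch, ch) for ch in text)


def capitalize_first(text: str, letter: str) -> str:
    """Upper-case the first occurrence of the given letter."""
    return text.replace(letter, letter.upper(), 1)


phrase = "over the river and through the woods, to grandmother's house we go."
step1 = first_letters(phrase)                     # acronym with punctuation
step2 = substitute(step1, {"t": "7", "a": "@"})  # 7 for t, @ for a
step3 = capitalize_first(step2, "h")             # capitalize H
print(step1, step2, step3)
```

Each step adds character classes without making the string harder to remember, which is the point of the technique.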

passwd, which is Pluggable Authentication Modules (PAM) aware and therefore checks to see if the password is too short or otherwise easy to crack. This check is performed using the pam_cracklib.so PAM module. Since PAM is customizable, it is possible to add more password integrity checkers, such as pam_passwdqc (available from http://www.openwall.com/passwdqc/) or to write a new module. For a list of available PAM modules, refer to http://www.kernel.org/pub/linux/libs/pam/modules.html. For more information about PAM, refer to Section 3.5, “Pluggable Authentication Modules (PAM)”.

Warning

chage command or the graphical User Manager (system-config-users) application.

-M option of the chage command specifies the maximum number of days the password is valid. For example, to set a user's password to expire in 90 days, use the following command:

chage -M 90 <username>

99999 after the -M option (this equates to a little over 273 years).

chage command in interactive mode to modify multiple password aging and account details. Use the following command to enter interactive mode:

chage <username>

[root@myServer ~]# chage davido
Changing the aging information for davido
Enter the new value, or press ENTER for the default
Minimum Password Age [0]: 10
Maximum Password Age [99999]: 90
Last Password Change (YYYY-MM-DD) [2006-08-18]:
Password Expiration Warning [7]:
Password Inactive [-1]:
Account Expiration Date (YYYY-MM-DD) [1969-12-31]:
[root@myServer ~]#
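To make the aging values in the session above concrete, the following sketch works out the dates they imply. It assumes the usual interpretation that the maximum age, minimum age, and warning period are all counted in days from the last password change. The function name is hypothetical and nothing here calls chage itself.

```python
from datetime import date, timedelta


def aging_dates(last_change: date, min_days: int, max_days: int, warn_days: int) -> dict:
    """Derive the key dates implied by a set of password aging values.

    Assumption: all periods are counted in whole days from the last change.
    """
    expires = last_change + timedelta(days=max_days)
    return {
        "earliest_change": last_change + timedelta(days=min_days),
        "warning_starts": expires - timedelta(days=warn_days),
        "expires": expires,
    }


# Values taken from the interactive session shown in the text.
dates = aging_dates(date(2006, 8, 18), min_days=10, max_days=90, warn_days=7)
for label, value in dates.items():
    print(f"{label}: {value.isoformat()}")

# The "never expires" value of 99999 days, expressed in years.
years = 99999 / 365.25
print(f"99999 days is about {years:.1f} years")
```

Working the dates out this way is a quick sanity check before applying a policy to many accounts.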

system-config-users at a shell prompt.

sudo or su. A setuid program is one that operates with the user ID (UID) of the program's owner rather than the user operating the program. Such programs are denoted by an s in the owner section of a long format listing, as in the following example:

-rwsr-xr-x 1 root root 47324 May 1 08:09 /bin/su

Note

s may be upper case or lower case. If it appears as upper case, it means that the underlying permission bit has not been set.
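The difference between a lower-case s and an upper-case S can be checked with Python's standard stat module. This is a minimal sketch: the mode values are constructed by hand for illustration rather than read from /bin/su, and the helper names are hypothetical.

```python
import stat


def has_setuid(mode: int) -> bool:
    """True when the setuid bit is set in a file mode."""
    return bool(mode & stat.S_ISUID)


def setuid_is_effective(mode: int) -> bool:
    """True when setuid is set and the owner execute bit is also set."""
    return has_setuid(mode) and bool(mode & stat.S_IXUSR)


# Regular file, setuid, owner may execute: shown with a lower-case s.
with_exec = stat.S_IFREG | 0o4755
# Regular file, setuid, owner execute bit missing: shown with an upper-case S.
without_exec = stat.S_IFREG | 0o4655

for mode in (with_exec, without_exec):
    print(stat.filemode(mode), has_setuid(mode), setuid_is_effective(mode))
```

To inspect a real file, the same helpers can be given `os.stat(path).st_mode`.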

pam_console.so, some activities normally reserved only for the root user, such as rebooting and mounting removable media are allowed for the first user that logs in at the physical console (refer to Section 3.5, “Pluggable Authentication Modules (PAM)” for more information about the pam_console.so module.) However, other important system administration tasks, such as altering network settings, configuring a new mouse, or mounting network devices, are not possible without administrative privileges. As a result, system administrators must decide how much access the users on their network should receive.

Table 3.1. Methods of Disabling the Root Account

| Method | Description | Effects | Does Not Affect |
|---|---|---|---|
| Changing the root shell. | Edit the /etc/passwd file and change the shell from /bin/bash to /sbin/nologin. | | |
| Disabling root access via any console device (tty). | An empty /etc/securetty file prevents root login on any devices attached to the computer. | | |
| Disabling root SSH logins. | Edit the /etc/ssh/sshd_config file and set the PermitRootLogin parameter to no. | | |
| Use PAM to limit root access to services. | Edit the file for the target service in the /etc/pam.d/ directory. Make sure the pam_listfile.so is required for authentication. [a] | | |

[a] Refer to Section 3.1.4.2.4, “Disabling Root Using PAM” for details.

/sbin/nologin in the /etc/passwd file. This prevents access to the root account through commands that require a shell, such as the su and the ssh commands.

Important

sudo command, can still access the root account.

/etc/securetty file. This file lists all devices the root user is allowed to log into. If the file does not exist at all, the root user can log in through any communication device on the system, whether via the console or a raw network interface. This is dangerous, because a user can log in to his machine as root via Telnet, which transmits the password in plain text over the network. By default, Fedora's /etc/securetty file only allows the root user to log in at the console physically attached to the machine. To prevent root from logging in, remove the contents of this file by typing the following command:

echo > /etc/securetty

Warning

/etc/securetty file does not prevent the root user from logging in remotely using the OpenSSH suite of tools because the console is not opened until after authentication.

/etc/ssh/sshd_config). Change the line that reads:

PermitRootLogin yes

PermitRootLogin no

kill -HUP `cat /var/run/sshd.pid`

/lib/security/pam_listfile.so module, allows great flexibility in denying specific accounts. The administrator can use this module to reference a list of users who are not allowed to log in. Below is an example of how the module is used for the vsftpd FTP server in the /etc/pam.d/vsftpd PAM configuration file (the \ character at the end of the first line in the following example is not necessary if the directive is on one line):

auth required /lib/security/pam_listfile.so item=user \
sense=deny file=/etc/vsftpd.ftpusers onerr=succeed

/etc/vsftpd.ftpusers file and deny access to the service for any listed user. The administrator can change the name of this file, and can keep separate lists for each service or use one central list to deny access to multiple services.
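The effect of the item=user, sense=deny, and onerr=succeed options can be modelled in a few lines. This is a simplified sketch of the decision logic as described here, not the module's actual implementation. The function name is hypothetical, and the list is passed in as lines instead of being read from /etc/vsftpd.ftpusers.

```python
from typing import Iterable, Optional


def listfile_decision(
    user: str,
    listed: Optional[Iterable[str]],
    sense: str = "deny",
    onerr: str = "succeed",
) -> bool:
    """Return True when authentication may proceed for this user.

    listed is the content of the list file, one name per line, or None to
    model a file that could not be read (the onerr case).
    """
    if listed is None:
        return onerr == "succeed"
    names = {line.strip() for line in listed if line.strip()}
    found = user in names
    # sense=deny rejects listed users; sense=allow accepts only listed users.
    return not found if sense == "deny" else found


deny_list = ["root\n", "bin\n", "daemon\n"]
print(listfile_decision("root", deny_list))   # listed user is refused
print(listfile_decision("alice", deny_list))  # unlisted user may continue
print(listfile_decision("root", None))        # unreadable file, onerr=succeed
```

The last case shows why onerr=succeed deserves thought: if the list file is missing or unreadable, listed accounts are no longer blocked.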

/etc/pam.d/pop and /etc/pam.d/imap for mail clients, or /etc/pam.d/ssh for SSH clients.

su or sudo.

su Command

su command, they are prompted for the root password and, after authentication, are given a root shell prompt.

su command, the user is the root user and has absolute administrative access to the system[12]. In addition, once a user has become root, it is possible for them to use the su command to change to any other user on the system without being prompted for a password.

usermod -a -G wheel <username>

wheel group.

system-config-users at a shell prompt.

su (/etc/pam.d/su) in a text editor and remove the comment # from the following line:

auth required /lib/security/$ISA/pam_wheel.so use_uid

wheel can use this program.

Note

wheel group by default.

sudo Command

sudo command offers another approach to giving users administrative access. When trusted users precede an administrative command with sudo, they are prompted for their own password. Then, when they have been authenticated and assuming that the command is permitted, the administrative command is executed as if they were the root user.

sudo command is as follows:

sudo <command>

mount.

Important

sudo command should take extra care to log out before walking away from their machines since sudoers can use the command again without being asked for a password within a five minute period. This setting can be altered via the configuration file, /etc/sudoers.

sudo command allows for a high degree of flexibility. For instance, only users listed in the /etc/sudoers configuration file are allowed to use the sudo command and the command is executed in the user's shell, not a root shell. This means the root shell can be completely disabled, as shown in Section 3.1.4.2.1, “Disabling the Root Shell”.

sudo command also provides a comprehensive audit trail. Each successful authentication is logged to the file /var/log/messages and the command issued along with the issuer's user name is logged to the file /var/log/secure.

sudo command is that an administrator can allow different users access to specific commands based on their needs.

sudo configuration file, /etc/sudoers, should use the visudo command.

visudo and add a line similar to the following in the user privilege specification section:

juan ALL=(ALL) ALL

juan, can use sudo from any host and execute any command.

sudo:

%users localhost=/sbin/shutdown -h now

/sbin/shutdown -h now as long as it is issued from the console.

sudoers has a detailed listing of options for this file.

Note

Important

cupsd — The default print server for Fedora.

lpd — An alternative print server.

xinetd — A super server that controls connections to a range of subordinate servers, such as gssftp and telnet.

sendmail — The Sendmail Mail Transport Agent (MTA) is enabled by default, but only listens for connections from the localhost.

sshd — The OpenSSH server, which is a secure replacement for Telnet.

cupsd running. The same is true for portmap. If you do not mount NFSv3 volumes or use NIS (the ypbind service), then portmap should be disabled.

netdump, transmit the contents of memory over the network unencrypted. Memory dumps can contain passwords or, even worse, database entries and other sensitive information.

finger and rwhod reveal information about users of the system.

rlogin, rsh, telnet, and vsftpd.

rlogin, rsh, and telnet) should be avoided in favor of SSH. Refer to Section 3.1.7, “Security Enhanced Communication Tools” for more information about sshd.

finger

authd (this was called identd in previous Fedora releases.)

netdump

netdump-server

nfs

rwhod

sendmail

smb (Samba)

yppasswdd

ypserv

ypxfrd

Important

system-config-firewall). This tool creates broad iptables rules for a general-purpose firewall using a control panel interface.

iptables is probably a better option. Refer to Section 3.7, “Using Firewalls” for more information.

telnet and rsh. OpenSSH includes a network service called sshd and three command line client applications:

ssh — A secure remote console access client.

scp — A secure remote copy command.

sftp — A secure pseudo-ftp client that allows interactive file transfer sessions.

Important

sshd service is inherently secure, the service must be kept up-to-date to prevent security threats. Refer to Section 1.5, “Security Updates” for more information.

xinetd, a super server that provides additional access, logging, binding, redirection, and resource utilization control.

Note

xinetd to create redundancy within service access controls. Refer to Section 3.7, “Using Firewalls” for more information about implementing firewalls with iptables commands.

hosts_options man page for information about the TCP Wrapper functionality and control language.

banner option.

vsftpd. To begin, create a banner file. It can be anywhere on the system, but it must have same name as the daemon. For this example, the file is called /etc/banners/vsftpd and contains the following line:

220-Hello, %c
220-All activity on ftp.example.com is logged.
220-Inappropriate use will result in your access privileges being removed.

%c token supplies a variety of client information, such as the username and hostname, or the username and IP address to make the connection even more intimidating.

/etc/hosts.allow file:

vsftpd : ALL : banners /etc/banners/

spawn directive.

/etc/hosts.deny file to deny any connection attempts from that network, and to log the attempts to a special file:

ALL : 206.182.68.0 : spawn /bin/echo `date` %c %d >> /var/log/intruder_alert

%d token supplies the name of the service that the attacker was trying to access.

spawn directive in the /etc/hosts.allow file.

Note

spawn directive executes any shell command, it is a good idea to create a special script to notify the administrator or execute a chain of commands in the event that a particular client attempts to connect to the server.

severity option.

emerg flag in the log files instead of the default flag, info, and deny the connection.

/etc/hosts.deny:

in.telnetd : ALL : severity emerg

authpriv logging facility, but elevates the priority from the default value of info to emerg, which posts log messages directly to the console.

xinetd to set a trap service and using it to control resource levels available to any given xinetd service. Setting resource limits for services can help thwart Denial of Service (DoS) attacks. Refer to the man pages for xinetd and xinetd.conf for a list of available options.

xinetd is its ability to add hosts to a global no_access list. Hosts on this list are denied subsequent connections to services managed by xinetd for a specified period or until xinetd is restarted. You can do this using the SENSOR attribute. This is an easy way to block hosts attempting to scan the ports on the server.

SENSOR is to choose a service you do not plan on using. For this example, Telnet is used.

/etc/xinetd.d/telnet and change the flags line to read:

flags = SENSOR

deny_time = 30

deny_time attribute are FOREVER, which keeps the ban in effect until xinetd is restarted, and NEVER, which allows the connection and logs it.

disable = no

SENSOR is a good way to detect and stop connections from undesirable hosts, it has two drawbacks:

SENSOR is running can mount a Denial of Service attack against particular hosts by forging their IP addresses and connecting to the forbidden port.
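The no_access behaviour described above amounts to a ban list with an expiry time. The sketch below models it and assumes that deny_time is expressed in minutes. The class and method names are hypothetical, the addresses are documentation examples, and time is passed in explicitly so the behaviour is easy to follow.

```python
class SensorBanList:
    """Toy model of a SENSOR-style ban list with a timed expiry."""

    def __init__(self, deny_minutes: int) -> None:
        self.deny_seconds = deny_minutes * 60
        self._banned_until: dict[str, float] = {}

    def trip(self, host: str, now: float) -> None:
        """Record that a host touched the trap service at time `now`."""
        self._banned_until[host] = now + self.deny_seconds

    def is_denied(self, host: str, now: float) -> bool:
        """True while the host's ban is still in effect."""
        expires = self._banned_until.get(host)
        if expires is None:
            return False
        if now >= expires:
            del self._banned_until[host]
            return False
        return True


bans = SensorBanList(deny_minutes=30)
bans.trip("203.0.113.5", now=0.0)
print(bans.is_denied("203.0.113.5", now=60.0))     # still inside the ban window
print(bans.is_denied("203.0.113.5", now=1800.0))   # ban has expired
print(bans.is_denied("198.51.100.7", now=60.0))    # never tripped the sensor
```

The model also makes the forgery drawback visible: `trip` trusts the source address it is given, so whoever can forge that address can get an innocent host banned.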

xinetd is its ability to set resource limits for services under its control.

cps = <number_of_connections> <wait_period> — Limits the rate of incoming connections. This directive takes two arguments:

<number_of_connections> — The number of connections per second to handle. If the rate of incoming connections is higher than this, the service is temporarily disabled. The default value is fifty (50).

<wait_period> — The number of seconds to wait before re-enabling the service after it has been disabled. The default interval is ten (10) seconds.

instances = <number_of_connections> — Specifies the total number of connections allowed to a service. This directive accepts either an integer value or UNLIMITED.

per_source = <number_of_connections> — Specifies the number of connections allowed to a service by each host. This directive accepts either an integer value or UNLIMITED.

rlimit_as = <number[K|M]> — Specifies the amount of memory address space the service can occupy in kilobytes or megabytes. This directive accepts either an integer value or UNLIMITED.

rlimit_cpu = <number_of_seconds> — Specifies the amount of time in seconds that a service may occupy the CPU. This directive accepts either an integer value or UNLIMITED.

xinetd service from overwhelming the system, resulting in a denial of service.
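As an illustration of how the cps directive behaves, the following sketch counts connections in one-second windows and disables the service for the wait period once the limit is exceeded. It is a simplified model built from the description above, not xinetd's own algorithm. The class name is hypothetical and the defaults mirror the stated values of 50 connections and 10 seconds.

```python
from typing import Optional


class CpsLimiter:
    """Toy model of `cps = <number_of_connections> <wait_period>`."""

    def __init__(self, max_per_second: int = 50, wait_period: float = 10.0) -> None:
        self.max_per_second = max_per_second
        self.wait_period = wait_period
        self._window_start: Optional[float] = None
        self._count = 0
        self._disabled_until: Optional[float] = None

    def accept(self, now: float) -> bool:
        """Return True if a connection arriving at `now` is served."""
        if self._disabled_until is not None:
            if now < self._disabled_until:
                return False          # service is temporarily disabled
            self._disabled_until = None
            self._window_start = None  # start counting afresh
        if self._window_start is None or now - self._window_start >= 1.0:
            self._window_start = now
            self._count = 0
        self._count += 1
        if self._count > self.max_per_second:
            self._disabled_until = now + self.wait_period
            return False
        return True


limiter = CpsLimiter(max_per_second=2, wait_period=10.0)
for t in (0.0, 0.25, 0.5, 5.0, 11.0):
    print(t, limiter.accept(t))
```

With a limit of 2, the third connection inside the same second trips the limiter, everything is refused during the wait period, and service resumes afterwards.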

portmap service is a dynamic port assignment daemon for RPC services such as NIS and NFS. It has weak authentication mechanisms and has the ability to assign a wide range of ports for the services it controls. For these reasons, it is difficult to secure.

Note

portmap only affects NFSv2 and NFSv3 implementations, since NFSv4 no longer requires it. If you plan to implement an NFSv2 or NFSv3 server, then portmap is required, and the following section applies.

portmap service since it has no built-in form of authentication.

portmap service, it is a good idea to add iptables rules to the server and restrict access to specific networks.

portmap service) from the 192.168.0.0/24 network. The second allows TCP connections to the same port from the localhost. This is necessary for the sgi_fam service used by Nautilus. All other packets are dropped.

iptables -A INPUT -p tcp ! -s 192.168.0.0/24 --dport 111 -j DROP

iptables -A INPUT -p tcp -s 127.0.0.1 --dport 111 -j ACCEPT

iptables -A INPUT -p udp ! -s 192.168.0.0/24 --dport 111 -j DROP

Note

ypserv, which is used in conjunction with portmap and other related services to distribute maps of usernames, passwords, and other sensitive information to any computer claiming to be within its domain.

/usr/sbin/rpc.yppasswdd — Also called the yppasswdd service, this daemon allows users to change their NIS passwords.

/usr/sbin/rpc.ypxfrd — Also called the ypxfrd service, this daemon is responsible for NIS map transfers over the network.

/usr/sbin/yppush — This application propagates changed NIS databases to multiple NIS servers.

/usr/sbin/ypserv — This is the NIS server daemon.

portmap service as outlined in Section 3.2.2, “Securing Portmap”, then address the following issues, such as network planning.

/etc/passwd map:

ypcat -d <NIS_domain> -h <DNS_hostname> passwd

/etc/shadow file by typing the following command:

ypcat -d <NIS_domain> -h <DNS_hostname> shadow

Note

/etc/shadow file is not stored within an NIS map.

o7hfawtgmhwg.domain.com. Similarly, create a different randomized NIS domain name. This makes it much more difficult for an attacker to access the NIS server.

/var/yp/securenets File

/var/yp/securenets file is blank or does not exist (as is the case after a default installation), NIS listens to all networks. One of the first things to do is to put netmask/network pairs in the file so that ypserv only responds to requests from the appropriate network.

/var/yp/securenets file:

255.255.255.0 192.168.0.0
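To show what a netmask/network pair means in practice, the sketch below parses entries in that form and tests client addresses against them with Python's ipaddress module. It handles only the netmask/network form shown above, the function names are hypothetical, and an empty list is treated as "allow everyone" to mirror the blank-file behaviour described earlier.

```python
import ipaddress
from typing import Iterable, List


def parse_securenets(lines: Iterable[str]) -> List[ipaddress.IPv4Network]:
    """Parse 'netmask network' pairs, ignoring blank lines and # comments."""
    networks = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        netmask, network = line.split()
        networks.append(ipaddress.ip_network(f"{network}/{netmask}"))
    return networks


def is_allowed(client: str, networks: List[ipaddress.IPv4Network]) -> bool:
    """True if the client falls inside any listed network."""
    if not networks:
        return True  # a blank file places no restriction on clients
    address = ipaddress.ip_address(client)
    return any(address in network for network in networks)


nets = parse_securenets(["255.255.255.0 192.168.0.0"])
print(nets)
print(is_allowed("192.168.0.17", nets))
print(is_allowed("10.0.0.1", nets))
```

Note the field order: the netmask comes first and the network second, which is the reverse of the more familiar network/mask notation.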

Warning

/var/yp/securenets file.

rpc.yppasswdd — the daemon that allows users to change their login passwords. Assigning ports to the other two NIS server daemons, rpc.ypxfrd and ypserv, allows for the creation of firewall rules to further protect the NIS server daemons from intruders.

/etc/sysconfig/network:

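YPSERV_ARGS="-p 834"
YPXFRD_ARGS="-p 835"

iptables -A INPUT -p tcp ! -s 192.168.0.0/24 --dport 834 -j DROP
iptables -A INPUT -p udp ! -s 192.168.0.0/24 --dport 834 -j DROP
iptables -A INPUT -p tcp ! -s 192.168.0.0/24 --dport 835 -j DROP
iptables -A INPUT -p udp ! -s 192.168.0.0/24 --dport 835 -j DROP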

Note

/etc/shadow map is sent over the network. If an intruder gains access to an NIS domain and sniffs network traffic, they can collect usernames and password hashes. With enough time, a password cracking program can guess weak passwords, and an attacker can gain access to a valid account on the network.

Important

portmap service as outlined in Section 3.2.2, “Securing Portmap”. NFS traffic now utilizes TCP in all versions, rather than UDP, and requires it when using NFSv4. NFSv4 now includes Kerberos user and group authentication, as part of the RPCSEC_GSS kernel module. Information on portmap is still included, since Fedora supports NFSv2 and NFSv3, both of which utilize portmap.

/etc/exports file. Be careful not to add extraneous spaces when editing this file.

/etc/exports file shares the directory /tmp/nfs/ to the host bob.example.com with read/write permissions.

/tmp/nfs/ bob.example.com(rw)

/etc/exports file, on the other hand, shares the same directory to the host bob.example.com with read-only permissions and shares it to the world with read/write permissions due to a single space character after the hostname.

/tmp/nfs/ bob.example.com (rw)
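The difference a single space makes can be demonstrated with a small parser. This is a rough sketch of how whitespace-separated client entries in an export line are read, not the real exports parser. The function name is hypothetical, a bare option list is recorded under "*" to stand for any host, and "(defaults)" marks a client that was given no explicit options.

```python
import re
from typing import Dict, Tuple

# host(options), host alone, or (options) alone
CLIENT_PATTERN = re.compile(r"([^()]*)(?:\(([^)]*)\))?")


def parse_exports_line(line: str) -> Tuple[str, Dict[str, str]]:
    """Split one export line into its path and a client-to-options map."""
    path, *tokens = line.split()
    grants: Dict[str, str] = {}
    for token in tokens:
        match = CLIENT_PATTERN.fullmatch(token)
        if match is None:
            raise ValueError(f"cannot parse client entry: {token!r}")
        host, options = match.group(1), match.group(2)
        grants[host or "*"] = options if options is not None else "(defaults)"
    return path, grants


intended = parse_exports_line("/tmp/nfs/ bob.example.com(rw)")
mistaken = parse_exports_line("/tmp/nfs/ bob.example.com (rw)")
print(intended)
print(mistaken)
```

In the second line the stray space turns `(rw)` into a separate entry with no host name, so the read/write options apply to every host while bob.example.com falls back to the defaults.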

showmount command to verify what is being shared:

showmount -e <hostname>

no_root_squash Option

nfsnobody user, an unprivileged user account. This changes the owner of all root-created files to nfsnobody, which prevents uploading of programs with the setuid bit set.

no_root_squash is used, remote root users are able to change any file on the shared file system and leave applications infected by trojans for other users to inadvertently execute.

MOUNTD_PORT — TCP and UDP port for mountd (rpc.mountd)

STATD_PORT — TCP and UDP port for status (rpc.statd)

LOCKD_TCPPORT — TCP port for nlockmgr (rpc.lockd)

LOCKD_UDPPORT — UDP port for nlockmgr (rpc.lockd)

rpcinfo -p command on the NFS server to see which ports and RPC programs are being used.

chown root <directory_name>

chmod 755 <directory_name>

/etc/httpd/conf/httpd.conf):

FollowSymLinks

/.

Indexes

UserDir

UserDir directive is disabled by default because it can confirm the presence of a user account on the system. To enable user directory browsing on the server, use the following directives:

UserDir enabled
UserDir disabled root

/root/. To add users to the list of disabled accounts, add a space-delimited list of users on the UserDir disabled line.

Important

IncludesNoExec directive. By default, the Server-Side Includes (SSI) module cannot execute commands. It is recommended that you do not change this setting unless absolutely necessary, as it could, potentially, enable an attacker to execute commands on the system.

gssftpd — A Kerberos-aware xinetd-based FTP daemon that does not transmit authentication information over the network.

tux) — A kernel-space Web server with FTP capabilities.

vsftpd — A standalone, security oriented implementation of the FTP service.

vsftpd FTP service.

vsftpd, add the following directive to the /etc/vsftpd/vsftpd.conf file:

ftpd_banner=<insert_greeting_here>

/etc/banners/. The banner file for FTP connections in this example is /etc/banners/ftp.msg. Below is an example of what such a file may look like:

#########
# Hello, all activity on ftp.example.com is logged.
#########

Note

220 as specified in Section 3.2.1.1.1, “TCP Wrappers and Connection Banners”.

vsftpd, add the following directive to the /etc/vsftpd/vsftpd.conf file:

banner_file=/etc/banners/ftp.msg

/var/ftp/ directory activates the anonymous account.

vsftpd package. This package establishes a directory tree for anonymous users and configures the permissions on directories to read-only for anonymous users.

Warning

/var/ftp/pub/.

mkdir /var/ftp/pub/upload

chmod 730 /var/ftp/pub/upload

drwx-wx--- 2 root ftp 4096 Feb 13 20:05 upload

Warning

vsftpd, add the following line to the /etc/vsftpd/vsftpd.conf file:

anon_upload_enable=YES

vsftpd, add the following directive to /etc/vsftpd/vsftpd.conf:

local_enable=NO

sudo privileges, the easiest way is to use a PAM list file as described in Section 3.1.4.2.4, “Disabling Root Using PAM”. The PAM configuration file for vsftpd is /etc/pam.d/vsftpd.

vsftpd, add the username to /etc/vsftpd.ftpusers

/etc/mail/sendmail.mc, the effectiveness of such attacks is limited.

confCONNECTION_RATE_THROTTLE — The number of connections the server can receive per second. By default, Sendmail does not limit the number of connections. If a limit is set and reached, further connections are delayed.

confMAX_DAEMON_CHILDREN — The maximum number of child processes that can be spawned by the server. By default, Sendmail does not assign a limit to the number of child processes. If a limit is set and reached, further connections are delayed.

confMIN_FREE_BLOCKS — The minimum number of free blocks which must be available for the server to accept mail. The default is 100 blocks.

confMAX_HEADERS_LENGTH — The maximum acceptable size (in bytes) for a message header.

confMAX_MESSAGE_SIZE — The maximum acceptable size (in bytes) for a single message.

/var/spool/mail/, on an NFS shared volume.

Note

RPCSEC_GSS kernel module does not utilize UID-based authentication. However, it is still considered good practice not to put the mail spool directory on NFS shared volumes.

/etc/passwd file should be set to /sbin/nologin (with the possible exception of the root user).

netstat -an or lsof -i. This method is less reliable since these programs do not connect to the machine from the network, but rather check to see what is running on the system. For this reason, these applications are frequent targets for replacement by attackers. Crackers attempt to cover their tracks if they open unauthorized network ports by replacing netstat and lsof with their own, modified versions.

nmap.

nmap -sT -O localhost

Starting Nmap 4.68 ( http://nmap.org ) at 2009-03-06 12:08 EST
Interesting ports on localhost.localdomain (127.0.0.1):
Not shown: 1711 closed ports
PORT      STATE SERVICE
22/tcp    open  ssh
25/tcp    open  smtp
111/tcp   open  rpcbind
113/tcp   open  auth
631/tcp   open  ipp
834/tcp   open  unknown
2601/tcp  open  zebra
32774/tcp open  sometimes-rpc11
Device type: general purpose
Running: Linux 2.6.X
OS details: Linux 2.6.17 - 2.6.24
Uptime: 4.122 days (since Mon Mar 2 09:12:31 2009)
Network Distance: 0 hops
OS detection performed. Please report any incorrect results at http://nmap.org/submit/ .
Nmap done: 1 IP address (1 host up) scanned in 1.420 seconds

portmap due to the presence of the sunrpc service. However, there is also a mystery service on port 834. To check if the port is associated with the official list of known services, type:

cat /etc/services | grep 834

netstat or lsof. To check for port 834 using netstat, use the following command:

netstat -anp | grep 834

tcp 0 0 0.0.0.0:834 0.0.0.0:* LISTEN 653/ypbind

netstat is reassuring because a cracker opening a port surreptitiously on a hacked system is not likely to allow it to be revealed through this command. Also, the [p] option reveals the process ID (PID) of the service that opened the port. In this case, the open port belongs to ypbind (NIS), which is an RPC service handled in conjunction with the portmap service.

lsof command reveals similar information to netstat since it is also capable of linking open ports to services:

lsof -i | grep 834

ypbind 653 0 7u IPv4 1319 TCP *:834 (LISTEN)
ypbind 655 0 7u IPv4 1319 TCP *:834 (LISTEN)
ypbind 656 0 7u IPv4 1319 TCP *:834 (LISTEN)
ypbind 657 0 7u IPv4 1319 TCP *:834 (LISTEN)

lsof, netstat, nmap, and services for more information.
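The netstat check above can be automated when many hosts need auditing. The following is a minimal Python sketch, not part of any tool named here: the `Listener` type and the `parse_netstat_line` function are hypothetical names, and the column layout is assumed to match the sample `netstat -anp` line shown earlier.

```python
from typing import NamedTuple, Optional


class Listener(NamedTuple):
    """One listening socket, as reported by `netstat -anp` (hypothetical type)."""
    proto: str
    address: str
    port: int
    pid: Optional[int]
    program: Optional[str]


def parse_netstat_line(line: str) -> Optional[Listener]:
    """Parse one TCP line of `netstat -anp` output.

    Returns None unless the socket is in the LISTEN state.
    Assumed columns: proto recv-q send-q local foreign state pid/program
    """
    fields = line.split()
    if len(fields) < 6 or fields[5] != "LISTEN":
        return None
    # The port is whatever follows the last colon of the local address.
    address, _, port = fields[3].rpartition(":")
    pid, program = None, None
    # The last column is "PID/program", or "-" when the owner is not visible.
    if len(fields) > 6 and "/" in fields[6]:
        pid_text, _, program = fields[6].partition("/")
        pid = int(pid_text) if pid_text.isdigit() else None
    return Listener(fields[0], address, int(port), pid, program)


if __name__ == "__main__":
    sample = "tcp 0 0 0.0.0.0:834 0.0.0.0:* LISTEN 653/ypbind"
    print(parse_netstat_line(sample))
```

Because the input comes from netstat on the local machine, the same caveat applies as for the command itself: a replaced netstat binary would also mislead this parser.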

Note

nss-tools package loaded.

certutil -A -d /etc/pki/nssdb -n "root ca cert" -t "CT,C,C" -i ./ca_cert_in_base64_format.crt

/etc/pam_pkcs11/pam_pkcs11.conf file, and locate the following line:

enable_ocsp = false;

enable_ocsp = true;

/etc/pam_pkcs11/cn_map.

cn_map file:

pklogin_finder debug

pklogin_finder tool in debug mode while an enrolled smart card is plugged in, it attempts to output information about the validity of the certificates and whether it can map a login ID from the certificates on the card.

Note

about:config to display the list of current configuration options.

negotiate to restrict the list of options.

Note

kinit to retrieve Kerberos tickets. To display the list of available tickets, type klist. The following shows an example output from these commands:

[user@host ~] $ kinit

Password for user@EXAMPLE.COM:

[user@host ~] $ klist

Ticket cache: FILE:/tmp/krb5cc_10920

Default principal: user@EXAMPLE.COM

Valid starting Expires Service principal

10/26/06 23:47:54 10/27/06 09:47:54 krbtgt/USER.COM@USER.COM

renew until 10/26/06 23:47:54

Kerberos 4 ticket cache: /tmp/tkt10920

klist: You have no tickets cached

export NSPR_LOG_MODULES=negotiateauth:5
export NSPR_LOG_FILE=/tmp/moz.log

/tmp/moz.log, and may give a clue to the problem. For example:

-1208550944[90039d0]: entering nsNegotiateAuth::GetNextToken()
-1208550944[90039d0]: gss_init_sec_context() failed: Miscellaneous failure No credentials cache found

kinit.

kinit successfully from your machine but you are unable to authenticate, you might see something like this in the log file:

-1208994096[8d683d8]: entering nsAuthGSSAPI::GetNextToken()
-1208994096[8d683d8]: gss_init_sec_context() failed: Miscellaneous failure Server not found in Kerberos database

/etc/krb5.conf file. For example:

.example.com = EXAMPLE.COM
example.com = EXAMPLE.COM

yum install pam_yubico

/etc/pam.d/gdm-password and locate the following line:

auth substack password-auth

auth sufficient pam_yubico.so id=16

/etc/yubikey_mapping or by individual user in ~/.yubico/authorized_yubikeys. The following is the syntax:

username:yubikey_token:another_yubikey_token
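The mapping syntax above is a colon-separated record: a username followed by one or more tokens. A minimal Python sketch of reading such a line follows; the function name `parse_yubikey_mapping` is hypothetical and the validation rules (non-empty username, at least one non-empty token) are assumptions made for illustration.

```python
def parse_yubikey_mapping(line):
    """Split one mapping line into (username, [tokens]).

    Assumes the format username:token[:token...] described above.
    """
    username, *tokens = line.strip().split(":")
    if not username or not tokens or not all(tokens):
        raise ValueError("expected username:token[:token...], got %r" % line)
    return username, tokens


if __name__ == "__main__":
    print(parse_yubikey_mapping("username:yubikey_token:another_yubikey_token"))
```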

Note

/etc/pam.d/ directory contains the PAM configuration files for each PAM-aware application. In earlier versions of PAM, the /etc/pam.conf file was used, but this file is now deprecated and is only used if the /etc/pam.d/ directory does not exist.

/etc/pam.d/ directory. Each file in this directory has the same name as the service to which it controls access.

/etc/pam.d/ directory. For example, the login program defines its service name as login and installs the /etc/pam.d/login PAM configuration file.

<module interface> <control flag> <module name> <module arguments>

auth — This module interface authenticates users. For example, it requests and verifies the validity of a password. Modules with this interface can also set credentials, such as group memberships or Kerberos tickets.

account — This module interface verifies that access is allowed. For example, it may check if a user account has expired or if a user is allowed to log in at a particular time of day.

password — This module interface is used for changing user passwords.

session — This module interface configures and manages user sessions. Modules with this interface can also perform additional tasks that are needed to allow access, like mounting a user's home directory and making the user's mailbox available.

Note

pam_unix.so provides all four module interfaces.

auth required pam_unix.so

pam_unix.so module's auth interface.
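The four-field line format can be illustrated with a small parser. This is a minimal Python sketch under stated assumptions: `PamRule` and `parse_pam_line` are hypothetical names, only lines beginning with `#` are treated as comments, and the bracketed control-flag syntax of newer PAM versions is not handled.

```python
from typing import List, NamedTuple, Optional


class PamRule(NamedTuple):
    """One rule from a file in /etc/pam.d/ (hypothetical type)."""
    interface: str        # auth, account, password, or session
    control: str          # for example required or sufficient
    module: str           # for example pam_unix.so
    arguments: List[str]  # zero or more module arguments


def parse_pam_line(line: str) -> Optional[PamRule]:
    """Parse '<module interface> <control flag> <module name> <module arguments>'.

    Returns None for blank lines and for comment lines starting with '#'.
    """
    text = line.strip()
    if not text or text.startswith("#"):
        return None
    fields = text.split()
    if len(fields) < 3:
        raise ValueError("expected at least three fields: %r" % line)
    return PamRule(fields[0], fields[1], fields[2], fields[3:])


if __name__ == "__main__":
    print(parse_pam_line("auth required pam_unix.so"))
    print(parse_pam_line("password required pam_cracklib.so retry=3"))
```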

reboot command normally uses several stacked modules, as seen in its PAM configuration file:

[root@MyServer ~]# cat /etc/pam.d/reboot
#%PAM-1.0
auth sufficient pam_rootok.so
auth required pam_console.so
#auth include system-auth
account required pam_permit.so

auth sufficient pam_rootok.so — This line uses the pam_rootok.so module to check whether the current user is root, by verifying that their UID is 0. If this test succeeds, no other modules are consulted and the command is executed. If this test fails, the next module is consulted.

auth required pam_console.so — This line uses the pam_console.so module to attempt to authenticate the user. If this user is already logged in at the console, pam_console.so checks whether there is a file in the /etc/security/console.apps/ directory with the same name as the service name (reboot). If such a file exists, authentication succeeds and control is passed to the next module.

#auth include system-auth — This line is commented and is not processed.

account required pam_permit.so — This line uses the pam_permit.so module to allow the root user or anyone logged in at the console to reboot the system.

required — The module result must be successful for authentication to continue. If the test fails at this point, the user is not notified until the results of all module tests that reference that interface are complete.

requisite — The module result must be successful for authentication to continue. However, if a test fails at this point, the user is notified immediately with a message reflecting the first failed required or requisite module test.

sufficient — The module result is ignored if it fails. However, if the result of a module flagged sufficient is successful and no previous modules flagged required have failed, then no other results are required and the user is authenticated to the service.

optional — The module result is ignored. A module flagged as optional only becomes necessary for successful authentication when no other modules reference the interface.

Important

required modules are called is not critical. Only the sufficient and requisite control flags cause order to become important.
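How the four control flags interact, and why order matters only for sufficient and requisite, can be seen in a simplified model. The Python sketch below is an illustration of the rules described above, not the libpam implementation; `evaluate_stack` is a hypothetical function and each module is reduced to a pre-computed success or failure.

```python
def evaluate_stack(results):
    """Return True if a stack of modules for one interface grants access.

    `results` is an ordered list of (control_flag, module_succeeded) pairs.
    Simplified model of the simple control flags only.
    """
    required_failed = False
    positive = False  # at least one success has been counted
    for flag, succeeded in results:
        if flag == "required":
            if succeeded:
                positive = True
            else:
                # Remember the failure but keep running the remaining modules.
                required_failed = True
        elif flag == "requisite":
            if not succeeded:
                return False  # fail immediately; later modules are not run
            positive = True
        elif flag == "sufficient":
            if succeeded and not required_failed:
                return True  # succeed immediately; later modules are not run
            # A failed sufficient module is ignored.
        elif flag == "optional":
            # Only decisive when nothing else produced a result.
            if succeeded:
                positive = True
        else:
            raise ValueError("unknown control flag: %r" % flag)
    return positive and not required_failed


if __name__ == "__main__":
    # The reboot example: root passes pam_rootok.so, so pam_console.so is skipped.
    print(evaluate_stack([("sufficient", True), ("required", False)]))
    # Same modules, reversed order: the required failure now counts.
    print(evaluate_stack([("required", False), ("sufficient", True)]))
```

Swapping the two entries changes the outcome, which is the ordering effect the control flag descriptions imply.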

pam.d man page, and the PAM documentation, located in the /usr/share/doc/pam/ directory, describe this newer syntax in detail.

/lib64/security/ directory, the directory name is omitted because the application is linked to the appropriate version of libpam, which can locate the correct version of the module.

pam_userdb.so module uses information stored in a Berkeley DB file to authenticate the user. Berkeley DB is an open source database system embedded in many applications. The module takes a db argument so that Berkeley DB knows which database to use for the requested service.

pam_userdb.so line in a PAM configuration. The <path-to-file> is the full path to the Berkeley DB database file:

auth required pam_userdb.so db=<path-to-file>

/var/log/secure file.

#%PAM-1.0
auth required pam_securetty.so
auth required pam_unix.so nullok
auth required pam_nologin.so
account required pam_unix.so
password required pam_cracklib.so retry=3
password required pam_unix.so shadow nullok use_authtok
session required pam_unix.so

#) at the beginning of the line.

auth required pam_securetty.so — This module ensures that if the user is trying to log in as root, the tty on which the user is logging in is listed in the /etc/securetty file, if that file exists.

Login incorrect message.

auth required pam_unix.so nullok — This module prompts the user for a password and then checks the password using the information stored in /etc/passwd and, if it exists, /etc/shadow.

nullok instructs the pam_unix.so module to allow a blank password.

auth required pam_nologin.so — This is the final authentication step. It checks whether the /etc/nologin file exists. If it exists and the user is not root, authentication fails.

Note

auth modules are checked, even if the first auth module fails. This prevents the user from knowing at what stage their authentication failed. Such knowledge in the hands of an attacker could allow them to more easily deduce how to crack the system.

account required pam_unix.so — This module performs any necessary account verification. For example, if shadow passwords have been enabled, the account interface of the pam_unix.so module checks to see if the account has expired or if the user has not changed the password within the allowed grace period.

password required pam_cracklib.so retry=3 — If a password has expired, the password component of the pam_cracklib.so module prompts for a new password. It then tests the newly created password to see whether it can easily be determined by a dictionary-based password cracking program.

retry=3 specifies that if the test fails the first time, the user has two more chances to create a strong password.

password required pam_unix.so shadow nullok use_authtok — This line specifies that if the program changes the user's password, it should use the password interface of the pam_unix.so module to do so.

shadow instructs the module to create shadow passwords when updating a user's password.

nullok instructs the module to allow the user to change their password from a blank password; otherwise, a null password is treated as an account lock.

use_authtok, provides a good example of the importance of order when stacking PAM modules. This argument instructs the module not to prompt the user for a new password. Instead, it accepts any password that was recorded by a previous password module. In this way, all new passwords must pass the pam_cracklib.so test for secure passwords before being accepted.

session required pam_unix.so — The final line instructs the session interface of the pam_unix.so module to manage the session. This module logs the user name and the service type to /var/log/secure at the beginning and end of each session. This module can be supplemented by stacking it with other session modules for additional functionality.

/usr/share/doc/pam/ directory.

pam_timestamp.so module. It is important to understand how this mechanism works, because a user who walks away from a terminal while pam_timestamp.so is in effect leaves the machine open to manipulation by anyone with physical access to the console.

pam_timestamp.so module creates a timestamp file. By default, this is created in the /var/run/sudo/ directory. If the timestamp file already exists, graphical administrative programs do not prompt for a password. Instead, the pam_timestamp.so module freshens the timestamp file, reserving an extra five minutes of unchallenged administrative access for the user.

/var/run/sudo/<user> file. For the desktop, the relevant file is unknown:root. If it is present and its timestamp is less than five minutes old, the credentials are valid.

ssh, use the /sbin/pam_timestamp_check -k root command to destroy the timestamp file.

/sbin/pam_timestamp_check -k root command from the same terminal window from which you launched the privileged application.

pam_timestamp.so module in order to use the /sbin/pam_timestamp_check -k command. Do not log in as root to use this command.

/sbin/pam_timestamp_check -k root </dev/null >/dev/null 2>/dev/null

pam_timestamp_check man page for more information about destroying the timestamp file using pam_timestamp_check.

pam_timestamp.so module accepts several directives. The following are the two most commonly used options:

timestamp_timeout — Specifies the period (in seconds) for which the timestamp file is valid. The default value is 300 (five minutes).

timestampdir — Specifies the directory in which the timestamp file is stored. The default value is /var/run/sudo/.
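The validity rule, a timestamp file less than timestamp_timeout seconds old, amounts to a single comparison. The Python sketch below only illustrates that rule; `timestamp_is_valid` is a hypothetical helper, it works on plain numbers rather than reading any file, and rejecting timestamps that lie in the future is an assumption made here.

```python
DEFAULT_TIMEOUT = 300  # seconds, the documented default for timestamp_timeout


def timestamp_is_valid(mtime, now, timeout=DEFAULT_TIMEOUT):
    """Return True if a timestamp last refreshed at `mtime` is still valid at `now`.

    Both values are seconds since the epoch. A timestamp from the future
    is treated as invalid (an assumption of this sketch).
    """
    age = now - mtime
    return 0 <= age < timeout


if __name__ == "__main__":
    print(timestamp_is_valid(mtime=1000.0, now=1120.0))  # two minutes old
    print(timestamp_is_valid(mtime=1000.0, now=1400.0))  # well past five minutes
```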

pam_console.so.

pam_console.so module is called by login or the graphical login programs, gdm, kdm, and xdm. If this user is the first user to log in at the physical console — referred to as the console user — the module grants the user ownership of a variety of devices normally owned by root. The console user owns these devices until the last local session for that user ends. After this user has logged out, ownership of the devices reverts back to the root user.

pam_console.so by editing the following files:

/etc/security/console.perms

/etc/security/console.perms.d/50-default.perms

50-default.perms file, you should create a new file (for example, xx-name.perms) and enter the required modifications. The name of the new default file must begin with a number higher than 50 (for example, 51-default.perms). This will override the defaults in the 50-default.perms file.

Warning

<console> and <xconsole> directives in the /etc/security/console.perms file to the following values:

<console>=tty[0-9][0-9]* vc/[0-9][0-9]* :0\.[0-9] :0
<xconsole>=:0\.[0-9] :0

<xconsole> directive entirely and change the <console> directive to the following value:

<console>=tty[0-9][0-9]* vc/[0-9][0-9]*

/etc/security/console.apps/ directory.

/sbin and /usr/sbin.

/sbin/halt

/sbin/reboot

/sbin/poweroff

pam_console.so module as a requirement for use.

pam — Good introductory information on PAM, including the structure and purpose of the PAM configuration files.

/etc/pam.conf and individual configuration files in the /etc/pam.d/ directory. By default, Fedora uses the individual configuration files in the /etc/pam.d/ directory, ignoring /etc/pam.conf even if it exists.

pam_console — Describes the purpose of the pam_console.so module. It also describes the appropriate syntax for an entry within a PAM configuration file.

console.apps — Describes the format and options available in the /etc/security/console.apps configuration file, which defines which applications are accessible by the console user assigned by PAM.

console.perms — Describes the format and options available in the /etc/security/console.perms configuration file, which specifies the console user permissions assigned by PAM.

pam_timestamp — Describes the pam_timestamp.so module.

/usr/share/doc/pam — Contains a System Administrators' Guide, a Module Writers' Manual, and the Application Developers' Manual, as well as a copy of the PAM standard, DCE-RFC 86.0.

/usr/share/doc/pam/txts/README.pam_timestamp — Contains information about the pam_timestamp.so PAM module.

Note

/etc/passwd or /etc/shadow, to a Kerberos password database can be tedious, as there is no automated mechanism to perform this task. Refer to Question 2.23 in the online Kerberos FAQ:

kinit. The default keytab file is /etc/krb5.keytab. The KDC administration server, /usr/kerberos/sbin/kadmind, is the only service that uses any other file (it uses /var/kerberos/krb5kdc/kadm5.keytab).

kinit command allows a principal who has already logged in to obtain and cache the initial ticket-granting ticket (TGT). Refer to the kinit man page for more information.

root[/instance]@REALM. For a typical user, the root is the same as their login ID. The instance is optional. If the principal has an instance, it is separated from the root with a forward slash ("/"). An empty string ("") is considered a valid instance (which differs from the default NULL instance), but using it can be confusing. All principals in a realm have their own key, which for users is derived from a password or is randomly set for services.
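The root[/instance]@REALM form can be taken apart mechanically. The following Python sketch shows one way to do so, including the distinction between an empty instance and no instance at all; `Principal` and `parse_principal` are hypothetical names, and escaped characters inside principal names are not handled.

```python
from typing import NamedTuple, Optional


class Principal(NamedTuple):
    root: str
    instance: Optional[str]  # None means no instance; "" is an empty instance
    realm: str


def parse_principal(principal: str) -> Principal:
    """Split 'root[/instance]@REALM' into its parts."""
    name, at, realm = principal.rpartition("@")
    if not at or not name or not realm:
        raise ValueError("expected root[/instance]@REALM, got %r" % principal)
    # Everything after the first slash is kept as the instance.
    root, slash, instance = name.partition("/")
    return Principal(root, instance if slash else None, realm)


if __name__ == "__main__":
    print(parse_principal("user@EXAMPLE.COM"))
    print(parse_principal("host/blah.example.com@EXAMPLE.COM"))
```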

kinit program after the user logs in.

kinit program on the client then decrypts the TGT using the user's key, which it computes from the user's password. The user's key is used only on the client machine and is not transmitted over the network.

Warning

Note

ntpd. Refer to /usr/share/doc/ntp/index.html for details on setting up Network Time Protocol servers.

/usr/share/doc/krb5-server for more information.

pam_krb5 module (provided in the pam_krb5 package) is installed. The pam_krb5 package contains sample configuration files that allow services such as login and gdm to authenticate users as well as obtain initial credentials using their passwords. If access to network servers is always performed using Kerberos-aware services or services that use GSS-API, such as IMAP, the network can be considered reasonably safe.

Important

ntp package for this purpose. Refer to /usr/share/doc/ntp/index.html for details about how to set up Network Time Protocol servers, and http://www.ntp.org for more information about NTP.

krb5-libs, krb5-server, and krb5-workstation packages on the dedicated machine which runs the KDC. This machine needs to be very secure — if possible, it should not run any services other than the KDC.

/etc/krb5.conf and /var/kerberos/krb5kdc/kdc.conf configuration files to reflect the realm name and domain-to-realm mappings. A simple realm can be constructed by replacing instances of EXAMPLE.COM and example.com with the correct domain name — being certain to keep uppercase and lowercase names in the correct format — and by changing the KDC from kerberos.example.com to the name of the Kerberos server. By convention, all realm names are uppercase and all DNS hostnames and domain names are lowercase. For full details about the formats of these configuration files, refer to their respective man pages.

kdb5_util utility from a shell prompt:

/usr/sbin/kdb5_util create -s

create command creates the database that stores keys for the Kerberos realm. The -s switch forces creation of a stash file in which the master server key is stored. If no stash file is present from which to read the key, the Kerberos server (krb5kdc) prompts the user for the master server password (which can be used to regenerate the key) every time it starts.

/var/kerberos/krb5kdc/kadm5.acl file. This file is used by kadmind to determine which principals have administrative access to the Kerberos database and their level of access. Most organizations can get by with a single line:

*/admin@EXAMPLE.COM *

kadmind has been started on the server, any user can access its services by running kadmin on any of the clients or servers in the realm. However, only users listed in the kadm5.acl file can modify the database in any way, except for changing their own passwords.

Note

kadmin utility communicates with the kadmind server over the network, and uses Kerberos to handle authentication. Consequently, the first principal must already exist before connecting to the server over the network to administer it. Create the first principal with the kadmin.local command, which is specifically designed to be used on the same host as the KDC and does not use Kerberos for authentication.

kadmin.local command at the KDC terminal to create the first principal:

/usr/kerberos/sbin/kadmin.local -q "addprinc username/admin"

/sbin/service krb5kdc start
/sbin/service kadmin start

addprinc command within kadmin. kadmin and kadmin.local are command line interfaces to the KDC. As such, many commands — such as addprinc — are available after launching the kadmin program. Refer to the kadmin man page for more information.

kinit to obtain a ticket and store it in a credential cache file. Next, use klist to view the list of credentials in the cache and use kdestroy to destroy the cache and the credentials it contains.

Note

kinit attempts to authenticate using the same system login username. If that username does not correspond to a principal in the Kerberos database, kinit issues an error message. If that happens, supply kinit with the name of the correct principal as an argument on the command line (kinit <principal>).

krb5.conf configuration file. While ssh and slogin are the preferred methods of remotely logging in to client systems, Kerberized versions of rsh and rlogin are still available, though deploying them requires a few more configuration changes.

krb5-libs and krb5-workstation packages on all of the client machines. Supply a valid /etc/krb5.conf file for each client (usually this can be the same krb5.conf file used by the KDC).

ssh or Kerberized rsh or rlogin, it must have its own host principal in the Kerberos database. The sshd, kshd, and klogind server programs all need access to the keys for the host service's principal. Additionally, in order to use the kerberized rsh and rlogin services, that workstation must have the xinetd package installed.

kadmin, add a host principal for the workstation on the KDC. The instance in this case is the hostname of the workstation. Use the -randkey option of kadmin's addprinc command to create the principal and assign it a random key:

addprinc -randkey host/blah.example.com

kadmin on the workstation itself, and using the ktadd command within kadmin:

ktadd -k /etc/krb5.keytab host/blah.example.com

ssh — OpenSSH uses GSS-API to authenticate users to servers if the client's and server's configuration both have GSSAPIAuthentication enabled. If the client also has GSSAPIDelegateCredentials enabled, the user's credentials are made available on the remote system.

rsh and rlogin — To use the kerberized versions of rsh and rlogin, enable klogin, eklogin, and kshell.

krb5-telnet must be enabled.

ftp. Be certain to set the instance to the fully qualified hostname of the FTP server, then enable gssftp.

cyrus-imap package uses Kerberos 5 if it also has the cyrus-sasl-gssapi package installed. The cyrus-sasl-gssapi package contains the Cyrus SASL plugins which support GSS-API authentication. Cyrus IMAP should function properly with Kerberos as long as the cyrus user is able to find the proper key in /etc/krb5.keytab, and the root for the principal is set to imap (created with kadmin).

cyrus-imap can be found in the dovecot package, which is also included in Fedora. This package contains an IMAP server but does not, to date, support GSS-API and Kerberos.

gserver uses a principal with a root of cvs and is otherwise identical to the CVS pserver.

foo.example.org → EXAMPLE.ORG

foo.example.com → EXAMPLE.COM

foo.hq.example.com → HQ.EXAMPLE.COM

krb5.conf. For example:

[domain_realm]
.example.com = EXAMPLE.COM
example.com = EXAMPLE.COM
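The relationship between the default hostname-to-realm rule and explicit domain_realm entries can be sketched in Python. This is an approximation for illustration, not the Kerberos library's exact lookup algorithm: `realm_for_host` is a hypothetical function, and the lookup order (exact hostname, then each parent domain with a leading period, then the default rule) is an assumption.

```python
def realm_for_host(hostname, domain_realm):
    """Map a hostname to a realm name.

    `domain_realm` is a dict such as {".example.com": "EXAMPLE.COM"}.
    Keys starting with a period match any host inside that domain.
    """
    host = hostname.lower().rstrip(".")
    # 1. An exact hostname entry wins.
    if host in domain_realm:
        return domain_realm[host]
    # 2. Otherwise try each parent domain, most specific first.
    labels = host.split(".")
    for i in range(1, len(labels)):
        suffix = "." + ".".join(labels[i:])
        if suffix in domain_realm:
            return domain_realm[suffix]
    # 3. Default rule: drop the first label and uppercase the rest.
    parent = ".".join(labels[1:])
    if not parent:
        raise ValueError("cannot derive a realm from %r" % hostname)
    return parent.upper()


if __name__ == "__main__":
    mapping = {".example.com": "EXAMPLE.COM", "example.com": "EXAMPLE.COM"}
    print(realm_for_host("foo.hq.example.com", {}))
    print(realm_for_host("foo.hq.example.com", mapping))
```

With an empty mapping the default rule places foo.hq.example.com in HQ.EXAMPLE.COM; with the two entries from the example, the same host resolves to EXAMPLE.COM instead.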

kadmind (it is also your realm's admin server), and one or more KDCs (slave KDCs) keep read-only copies of the database and run kpropd.

krb5.conf and kdc.conf files are copied to the slave KDC.

kadmin.local from a root shell on the master KDC and use its add_principal command to create a new entry for the master KDC's host service, and then use its ktadd command to simultaneously set a random key for the service and store the random key in the master's default keytab file. This key will be used by the kprop command to authenticate to the slave servers. You will only need to do this once, regardless of how many slave servers you install.

# kadmin.local -r EXAMPLE.COM
Authenticating as principal root/admin@EXAMPLE.COM with password.
kadmin: add_principal -randkey host/masterkdc.example.com
Principal "host/masterkdc.example.com@EXAMPLE.COM" created.
kadmin: ktadd host/masterkdc.example.com
Entry for principal host/masterkdc.example.com with kvno 3, encryption type Triple DES cbc mode with HMAC/sha1 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/masterkdc.example.com with kvno 3, encryption type ArcFour with HMAC/md5 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/masterkdc.example.com with kvno 3, encryption type DES with HMAC/sha1 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/masterkdc.example.com with kvno 3, encryption type DES cbc mode with RSA-MD5 added to keytab WRFILE:/etc/krb5.keytab.
kadmin: quit

kadmin from a root shell on the slave KDC and use its add_principal command to create a new entry for the slave KDC's host service, and then use kadmin's ktadd command to simultaneously set a random key for the service and store the random key in the slave's default keytab file. This key is used by the kpropd service when authenticating clients.

# kadmin -p jimbo/admin@EXAMPLE.COM -r EXAMPLE.COM
Authenticating as principal jimbo/admin@EXAMPLE.COM with password.
Password for jimbo/admin@EXAMPLE.COM:
kadmin: add_principal -randkey host/slavekdc.example.com
Principal "host/slavekdc.example.com@EXAMPLE.COM" created.
kadmin: ktadd host/slavekdc.example.com@EXAMPLE.COM
Entry for principal host/slavekdc.example.com with kvno 3, encryption type Triple DES cbc mode with HMAC/sha1 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/slavekdc.example.com with kvno 3, encryption type ArcFour with HMAC/md5 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/slavekdc.example.com with kvno 3, encryption type DES with HMAC/sha1 added to keytab WRFILE:/etc/krb5.keytab.
Entry for principal host/slavekdc.example.com with kvno 3, encryption type DES cbc mode with RSA-MD5 added to keytab WRFILE:/etc/krb5.keytab.
kadmin: quit

kprop service with a new realm database. To restrict access, the kprop service on the slave KDC will only accept updates from clients whose principal names are listed in /var/kerberos/krb5kdc/kpropd.acl. Add the master KDC's host service's name to that file.

# echo host/masterkdc.example.com@EXAMPLE.COM > /var/kerberos/krb5kdc/kpropd.acl

/var/kerberos/krb5kdc/.k5.REALM, either copy it to the slave KDC using any available secure method, or create a dummy database and identical stash file on the slave KDC by running kdb5_util create -s (the dummy database will be overwritten by the first successful database propagation) and supplying the same password.

kprop service. Then, double-check that the kadmin service is disabled.

kprop command will read (/var/kerberos/krb5kdc/slave_datatrans), and then use the kprop command to transmit its contents to the slave KDC.

# /usr/sbin/kdb5_util dump /var/kerberos/krb5kdc/slave_datatrans
# kprop slavekdc.example.com

kinit, verify that a client system whose krb5.conf lists only the slave KDC in its list of KDCs for your realm is now correctly able to obtain initial credentials from the slave KDC.

kprop command to transmit the database to each slave KDC in turn, and configure the cron service to run the script periodically.

A.EXAMPLE.COM to access a service in the B.EXAMPLE.COM realm, both realms must share a key for a principal named krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM, and both keys must have the same key version number associated with them.

# kadmin -r A.EXAMPLE.COM
kadmin: add_principal krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM
Enter password for principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM":
Re-enter password for principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM":
Principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM" created.
kadmin: quit
# kadmin -r B.EXAMPLE.COM
kadmin: add_principal krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM
Enter password for principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM":
Re-enter password for principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM":
Principal "krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM" created.
kadmin: quit

get_principal command to verify that both entries have matching key version numbers (kvno values) and encryption types.

Dumping the Database Doesn't Do It

add_principal command's -randkey option to assign a random key instead of a password, dump the new entry from the database of the first realm, and import it into the second. This will not work unless the master keys for the realm databases are identical, as the keys contained in a database dump are themselves encrypted using the master key.

A.EXAMPLE.COM realm are now able to authenticate to services in the B.EXAMPLE.COM realm. Put another way, the B.EXAMPLE.COM realm now trusts the A.EXAMPLE.COM realm, or phrased even more simply, B.EXAMPLE.COM now trusts A.EXAMPLE.COM.

B.EXAMPLE.COM realm may trust clients from the A.EXAMPLE.COM realm to authenticate to services in the B.EXAMPLE.COM realm, but that has no effect on whether clients in the B.EXAMPLE.COM realm are trusted to authenticate to services in the A.EXAMPLE.COM realm. To establish trust in the other direction, both realms would need to share keys for the krbtgt/A.EXAMPLE.COM@B.EXAMPLE.COM service (note the reversed order of the two realms compared to the example above).

A.EXAMPLE.COM can authenticate to services in B.EXAMPLE.COM, and clients from B.EXAMPLE.COM can authenticate to services in C.EXAMPLE.COM, then clients in A.EXAMPLE.COM can also authenticate to services in C.EXAMPLE.COM, even if C.EXAMPLE.COM doesn't directly trust A.EXAMPLE.COM. This means that, on a network with multiple realms which all need to trust each other, making good choices about which trust relationships to set up can greatly reduce the amount of effort required.

service/server.example.com@EXAMPLE.COM

EXAMPLE.COM is the name of the realm.

domain_realm section of /etc/krb5.conf to map either a hostname (server.example.com) or a DNS domain name (.example.com) to the name of a realm (EXAMPLE.COM).

A.EXAMPLE.COM, B.EXAMPLE.COM, and EXAMPLE.COM. When a client in the A.EXAMPLE.COM realm attempts to authenticate to a service in B.EXAMPLE.COM, it will, by default, first attempt to get credentials for the EXAMPLE.COM realm, and then to use those credentials to obtain credentials for use in the B.EXAMPLE.COM realm.

A.EXAMPLE.COM, authenticating to a service in B.EXAMPLE.COM

A.EXAMPLE.COM → EXAMPLE.COM → B.EXAMPLE.COM

A.EXAMPLE.COM and EXAMPLE.COM share a key for krbtgt/EXAMPLE.COM@A.EXAMPLE.COM

EXAMPLE.COM and B.EXAMPLE.COM share a key for krbtgt/B.EXAMPLE.COM@EXAMPLE.COM

SITE1.SALES.EXAMPLE.COM, authenticating to a service in EVERYWHERE.EXAMPLE.COM

SITE1.SALES.EXAMPLE.COM → SALES.EXAMPLE.COM → EXAMPLE.COM → EVERYWHERE.EXAMPLE.COM

SITE1.SALES.EXAMPLE.COM and SALES.EXAMPLE.COM share a key for krbtgt/SALES.EXAMPLE.COM@SITE1.SALES.EXAMPLE.COM

SALES.EXAMPLE.COM and EXAMPLE.COM share a key for krbtgt/EXAMPLE.COM@SALES.EXAMPLE.COM

EXAMPLE.COM and EVERYWHERE.EXAMPLE.COM share a key for krbtgt/EVERYWHERE.EXAMPLE.COM@EXAMPLE.COM

DEVEL.EXAMPLE.COM and PROD.EXAMPLE.ORG

DEVEL.EXAMPLE.COM → EXAMPLE.COM → COM → ORG → EXAMPLE.ORG → PROD.EXAMPLE.ORG

DEVEL.EXAMPLE.COM and EXAMPLE.COM share a key for krbtgt/EXAMPLE.COM@DEVEL.EXAMPLE.COM

EXAMPLE.COM and COM share a key for krbtgt/COM@EXAMPLE.COM

COM and ORG share a key for krbtgt/ORG@COM

ORG and EXAMPLE.ORG share a key for krbtgt/EXAMPLE.ORG@ORG

EXAMPLE.ORG and PROD.EXAMPLE.ORG share a key for krbtgt/PROD.EXAMPLE.ORG@EXAMPLE.ORG
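The three hierarchical paths above follow one pattern: climb from the client's realm to the nearest shared parent (or to the top-level label when nothing is shared), then descend to the service's realm. The Python sketch below reproduces that pattern and lists the key each adjacent pair of realms must share. It is an illustration of the examples in this section only; `hierarchical_path` and `shared_keys` are hypothetical names, not functions of any Kerberos library.

```python
def hierarchical_path(client_realm, service_realm):
    """Return the default chain of realms from client to service."""
    c = client_realm.split(".")
    s = service_realm.split(".")
    # Count how many trailing labels the two realm names have in common.
    common = 0
    while common < min(len(c), len(s)) and c[-1 - common] == s[-1 - common]:
        common += 1
    stop = max(common, 1)  # with nothing shared, climb to the top-level label
    up = [".".join(c[i:]) for i in range(0, len(c) - stop + 1)]
    down = [".".join(s[i:]) for i in range(len(s) - stop, -1, -1)]
    if down[0] == up[-1]:
        down = down[1:]  # do not list the shared parent twice
    return up + down


def shared_keys(path):
    """Return the krbtgt principal each adjacent pair of realms must share."""
    return ["krbtgt/%s@%s" % (nxt, cur) for cur, nxt in zip(path, path[1:])]


if __name__ == "__main__":
    path = hierarchical_path("A.EXAMPLE.COM", "B.EXAMPLE.COM")
    print(" -> ".join(path))
    for key in shared_keys(path):
        print(key)
```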

capaths section of /etc/krb5.conf, so that clients which have credentials for one realm will be able to look up which realm is next in the chain that eventually leads to being able to authenticate to servers.

capaths section is relatively straightforward: each entry in the section is named after a realm in which a client might exist. Inside of that subsection, the set of intermediate realms from which the client must obtain credentials is listed as values of the key which corresponds to the realm in which a service might reside. If there are no intermediate realms, the value "." is used.

[capaths]

A.EXAMPLE.COM = {

B.EXAMPLE.COM = .

C.EXAMPLE.COM = B.EXAMPLE.COM

D.EXAMPLE.COM = B.EXAMPLE.COM

D.EXAMPLE.COM = C.EXAMPLE.COM

}

A.EXAMPLE.COM realm can obtain cross-realm credentials for B.EXAMPLE.COM directly from the A.EXAMPLE.COM KDC.

C.EXAMPLE.COM realm, they will first need to obtain necessary credentials from the B.EXAMPLE.COM realm (this requires that krbtgt/B.EXAMPLE.COM@A.EXAMPLE.COM exist), and then use those credentials to obtain credentials for use in the C.EXAMPLE.COM realm (using krbtgt/C.EXAMPLE.COM@B.EXAMPLE.COM).

D.EXAMPLE.COM realm, they will first need to obtain necessary credentials from the B.EXAMPLE.COM realm, and then credentials from the C.EXAMPLE.COM realm, before finally obtaining credentials for use with the D.EXAMPLE.COM realm.
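To check what a capaths section says for a given pair of realms, the entries can be read back with a short script. This is a rough sketch rather than a full krb5.conf parser: capath_lookup is a hypothetical helper, and it assumes the layout used in the example above, with spaces around each "=" and one value per line.

```shell
#!/bin/sh
# Sketch only: capath_lookup is a hypothetical helper, not a Kerberos tool.
# Usage: capath_lookup FILE CLIENT_REALM SERVICE_REALM
# Prints each intermediate realm on its own line, or "." for a direct path.
capath_lookup() {
    awk -v client="$2" -v service="$3" '
        # Track whether the current line is inside the [capaths] section.
        /^[ \t]*\[/ { in_capaths = ($1 == "[capaths]"); next }
        !in_capaths { next }
        # "REALM = {" opens the subsection for one client realm.
        $2 == "=" && $3 == "{" { current = $1; next }
        $1 == "}" { current = ""; next }
        # Inside the matching subsection, print values keyed by the service realm.
        current == client && $1 == service && $2 == "=" { print $3 }
    ' "$1"
}
```

Run against a file holding the example section, capath_lookup FILE A.EXAMPLE.COM D.EXAMPLE.COM prints B.EXAMPLE.COM and then C.EXAMPLE.COM, which is the order in which the client obtains credentials.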

Note

A.EXAMPLE.COM realm can obtain cross-realm credentials from the B.EXAMPLE.COM realm directly. Without the "." indicating this, the client would instead attempt to use a hierarchical path, in this case:

A.EXAMPLE.COM → EXAMPLE.COM → B.EXAMPLE.COM

/usr/share/doc/krb5-server/ directory.

/usr/share/doc/krb5-workstation/ directory.

man kerberos — An introduction to the Kerberos system which describes how credentials work and provides recommendations for obtaining and destroying Kerberos tickets. The bottom of the man page references a number of related man pages.

man kinit — Describes how to use this command to obtain and cache a ticket-granting ticket.

man kdestroy — Describes how to use this command to destroy Kerberos credentials.

man klist — Describes how to use this command to list cached Kerberos credentials.

man kadmin — Describes how to use this command to administer the Kerberos V5 database.

man kdb5_util — Describes how to use this command to create and perform low-level administrative functions on the Kerberos V5 database.

man krb5kdc — Describes available command line options for the Kerberos V5 KDC.

man kadmind — Describes available command line options for the Kerberos V5 administration server.

man krb5.conf — Describes the format and options available within the configuration file for the Kerberos V5 library.

man kdc.conf — Describes the format and options available within the configuration file for the Kerberos V5 AS and KDC.

firewalld provides a dynamically managed firewall with support for network zones to assign a level of trust to a network and its associated connections and interfaces. It has support for IPv4 and IPv6 firewall settings. It supports Ethernet bridges and has a separation of runtime and permanent configuration options. It also has an interface for services or applications to add firewall rules directly.

firewalld, which in turn uses the iptables tool to communicate with Netfilter in the kernel, which implements packet filtering.

firewall. The firewall icon will appear. Press Enter once it is highlighted. The firewall-config tool appears. You will be prompted for your user password.

firewalld is dynamic rather than static because changes to the configuration can be made at any time and are implemented immediately; there is no need to save or apply the changes. No unintended disruption of existing network connections occurs, as no part of the firewall has to be reloaded.

man firewall-cmd(1). Permanent changes need to be made as explained in man firewalld(1).

firewalld is stored in various XML files in /usr/lib/firewalld/ and /etc/firewalld/. This allows a great deal of flexibility as the files can be edited, written to, backed up, used as templates for other installations and so on.

firewalld using D-Bus.

firewalld and the iptables service are:

/etc/sysconfig/iptables while firewalld stores it in various XML files in /usr/lib/firewalld/ and /etc/firewalld/. Note that the /etc/sysconfig/iptables file does not exist as firewalld is installed by default on Fedora.

/etc/sysconfig/iptables while with firewalld there is no re-creating of all the rules; only the differences are applied. Consequently, firewalld can change the settings during run time without existing connections being lost.

firewalld to which zone an interface belongs. An interface's assigned zone can be changed by NetworkManager or via the firewall-config tool which can open the relevant NetworkManager window for you.

/etc/firewalld/ are a range of preset settings which can be quickly applied to a network interface. They are listed here with a brief explanation:

drop (immutable)

block (immutable)

IPv4 and icmp6-adm-prohibited for IPv6. Only network connections initiated from within the system are possible.

public

external

dmz

work

home

internal

trusted (immutable)

firewalld is set to be the public zone.

firewalld.service(5) man page. The services are specified by means of individual XML configuration files which are named in the following format: service-name.xml.

firewall. The firewall icon will appear. Press Enter once it is highlighted. The firewall-config tool appears. You will be prompted for your user password.

You can now view the list of services under the Services tab.

~]# ls /usr/lib/firewalld/services/

Files in /usr/lib/firewalld/services/ must not be edited. Only the files in /etc/firewalld/services/ should be edited.

~]# ls /etc/firewalld/services/

/etc/firewalld/services/. If a service has not been added or changed by the user, then no corresponding XML file will be found in /etc/firewalld/services/. The files in /usr/lib/firewalld/services/ can be used as templates if you wish to add or change a service. As root, issue a command in the following format:

~]# cp /usr/lib/firewalld/services/[service].xml /etc/firewalld/services/[service].xml

You may then edit the newly created file. firewalld will prefer files in /etc/firewalld/services/ but will fall back to /usr/lib/firewalld/services/ should a file be deleted, but only after a reload.
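Because a file in /etc/firewalld/services/ takes precedence, it is worth making sure the copy does not overwrite a file that has already been customized. The following is a minimal sketch of such a guarded copy; copy_service_template is a hypothetical helper, and the two optional directory arguments exist only so that it can be tried out on scratch directories.

```shell
#!/bin/sh
# Sketch only: copy_service_template is a hypothetical helper.
# Usage: copy_service_template SERVICE [TEMPLATE_DIR] [OVERRIDE_DIR]
copy_service_template() (
    service=$1
    src_dir=${2:-/usr/lib/firewalld/services}
    dst_dir=${3:-/etc/firewalld/services}

    # Refuse to continue when there is no template to copy.
    if [ ! -f "$src_dir/$service.xml" ]; then
        echo "no template for $service in $src_dir" >&2
        exit 1
    fi

    # Leave an existing customized file alone.
    if [ -e "$dst_dir/$service.xml" ]; then
        echo "$dst_dir/$service.xml already exists, leaving it untouched" >&2
        exit 0
    fi

    cp "$src_dir/$service.xml" "$dst_dir/$service.xml"
)
```

Run as root with only the service name, for example copy_service_template ssh, it uses the two directories named above.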

firewalld has a so-called direct interface, which enables passing rules directly to iptables, ip6tables and ebtables. It is intended for use by applications and not users. Using the direct interface is dangerous if you are not very familiar with iptables, as you could inadvertently cause a breach in the firewall. firewalld still tracks what has been added, so it is still possible to query firewalld and see the changes made by an application using the direct interface mode. The direct interface is used by adding the --direct option to firewall-cmd.

firewalld using D-Bus.

firewalld and the graphical user interface configuration tool firewall-config are installed by default but firewall-applet is not. This can be checked by running the following command as root:

~]# yum install firewalld firewall-config

firewalld, run the following commands as root:

~]# systemctl disable firewalld

~]# systemctl stop firewalld

firewalld, first disable firewalld by running the following command as root:

~]# systemctl disable firewalld

~]# systemctl stop firewalld

~]# yum install iptables-services

~]# touch /etc/sysconfig/iptables

~]# touch /etc/sysconfig/ip6tables

~]# systemctl start iptables

~]# systemctl start ip6tables

~]# systemctl enable iptables

~]# systemctl enable ip6tables

firewalld, enter the following command as root:

~]# systemctl start firewalld

firewalld is running, enter the following command:

~]$ systemctl status firewalld

firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled)

Active: active (running) since Sat 2013-04-06 22:56:59 CEST; 2 days ago

Main PID: 688 (firewalld)

CGroup: name=systemd:/system/firewalld.service

~]$ firewall-cmd --state

running

firewalld, run the following command as root:

~]# yum install firewalld

To install the graphical user interface tool firewall-config, run the following command as root:

~]# yum install firewall-config

To install the optional firewall-applet, run the following command as root:

~]# yum install firewall-applet

firewall. The firewall icon will appear. Press Enter once it is highlighted. The firewall-config tool appears. You will be prompted for your user password.

~]# firewall-config

The Firewall Configuration window opens. Note that this command can be run as a normal user, but you will then be prompted for the root password from time to time.

firewalld.

Note

IPv4 or IPv6.

IPv4 addresses to a single external address, start the firewall-config tool and select the network zone whose addresses are to be translated. Select the Masquerading tab and select the check box to enable the translation of IPv4 addresses to a single address.

IPv4 address, select the Forward to another port check box. Enter the destination IP address and port or port range. The default is to send to the same port if the port field is left empty. Click OK to apply the changes.

ICMP filter, start the firewall-config tool and select the network zone whose messages are to be filtered. Select the ICMP Filter tab and select the check box for each type of ICMP message you want to filter. Clear the check box to disable a filter. This setting is per direction and the default allows everything.

ICMP filter, start the firewall-config tool and then select mode from the drop-down selection menu labeled Current View. An button appears on the right hand side of the ICMP Filter tab.

firewalld application which is installed by default. You can verify that it is installed by checking the version or displaying the help output. Enter the following command to check the version:

~]$ firewall-cmd --version

Enter the following command to view the help output:

~]$ firewall-cmd --help

man firewall-cmd(1).

Note

--permanent option to all commands apart from the --direct commands (which are by their nature temporary). Note that this not only means the change will be permanent, but also that the change will only take effect after a firewall reload, a service restart, or a system reboot. Settings made with firewall-cmd without the --permanent option take effect immediately, but are only valid until the next firewall reload, system boot, or firewalld service restart. Reloading the firewall does not in itself break connections, but be aware that you are discarding temporary changes by doing so.
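One way to have a change take effect immediately and also survive a reload is to issue the same command twice, once without and once with the --permanent option. The following is a minimal sketch of that pattern; apply_now_and_keep and the FIREWALL_CMD variable are hypothetical names introduced for illustration, and it is not suitable for --direct commands.

```shell
#!/bin/sh
# Sketch only: apply_now_and_keep and FIREWALL_CMD are hypothetical names.
# Set FIREWALL_CMD=echo for a dry run that only prints the arguments.
: "${FIREWALL_CMD:=firewall-cmd}"

apply_now_and_keep() {
    # First the runtime change, then the same change in the permanent
    # configuration; the second call is skipped if the first one fails.
    "$FIREWALL_CMD" "$@" && "$FIREWALL_CMD" --permanent "$@"
}

# Example (as root): allow the http service in the public zone.
# apply_now_and_keep --zone=public --add-service=http
```

Issuing both commands avoids a reload, which would discard any other temporary changes that are in place.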

firewalld, enter the following command:

~]$ firewall-cmd --state

~]$ firewall-cmd --get-active-zones

public: em1 wlan0

~]$ firewall-cmd --get-zone-of-interface=em1

public

~]# firewall-cmd --zone=public --list-interfaces

em1 wlan0

This information is obtained from NetworkManager and only shows interfaces, not connections.

~]# firewall-cmd --zone=public --list-all

public

interfaces:

services: mdns dhcpv6-client ssh

ports:

forward-ports:

icmp-blocks: source-quench

~]# firewall-cmd --get-services

cluster-suite pop3s bacula-client smtp ipp radius bacula ftp mdns samba dhcpv6-client dns openvpn imaps samba-client http https ntp vnc-server telnet libvirt ssh ipsec ipp-client amanda-client tftp-client nfs tftp libvirt-tls

This will list the names of the services in /usr/lib/firewalld/services/. Note that the configuration files themselves are named service-name.xml.

~]# firewall-cmd --get-services --permanent

~]$ nmcli -f NAME,DEVICES,ZONE con status

NAME DEVICES ZONE

my-little-wifi wlan0 home

VPN connection 1 wlan0 work

System em1 em1 --

-- means the interface is assigned to the default zone.

~]# firewall-cmd --panic-on

All incoming and outgoing packets will be dropped. Active connections will be terminated after a period of inactivity; the time taken depends on the individual session timeout values.

~]# firewall-cmd --panic-off

After disabling panic mode, established connections might work again if panic mode was enabled for a short period of time.

~]$ firewall-cmd --query-panic && echo "enabled" || echo "Not enabled"

~]# firewall-cmd --reload

~]# firewall-cmd --complete-reload

This command should normally only be used in case of severe firewall problems. For example, if there are state information problems and no connection can be established but the firewall rules are correct.

~]# firewall-cmd --zone=public --add-interface=em1

To make this setting permanent, add the --permanent option and reload the firewall.

ifcfg-em1 configuration file, for example to add em1 to the work zone, as root use an editor to add the following line to ifcfg-em1:

ZONE=work

Note that if you omit the ZONE option, or use ZONE=, or ZONE='', then the default zone will be used.

/etc/firewalld/firewalld.conf and edit the file as follows:

# default zone

# The default zone used if an empty zone string is used.

# Default: public

DefaultZone=home
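After editing the file, the configured value can be read back to confirm the change. The following is a small sketch that prints whatever follows DefaultZone= in the file; default_zone is a hypothetical helper, and the optional file argument exists only so that it can be tried on a copy. It reports what the file says, which is not necessarily what the running daemon uses until firewalld is reloaded.

```shell
#!/bin/sh
# Sketch only: default_zone is a hypothetical helper.
# Usage: default_zone [CONFIG_FILE]
default_zone() {
    conf=${1:-/etc/firewalld/firewalld.conf}
    # Commented lines start with "#", so only an active DefaultZone= line
    # matches; if there are several, the last one is reported.
    sed -n 's/^DefaultZone=//p' "$conf" | tail -n 1
}
```

With the file contents shown above, default_zone prints home.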